Pepr

What happened to Pepr’s stars?

In February 2025, an accidental change to the repository’s visibility reset the star count.

The visibility issue was quickly resolved, but the stars were unfortunately lost.

Pepr had over 200 stars, demonstrating its recognition and value within the Kubernetes community.

We’re working to rebuild that recognition.

If you’ve previously starred Pepr, or if you find it a useful project, we would greatly appreciate it if you could re-star the repository.

We really appreciate your support! :star:

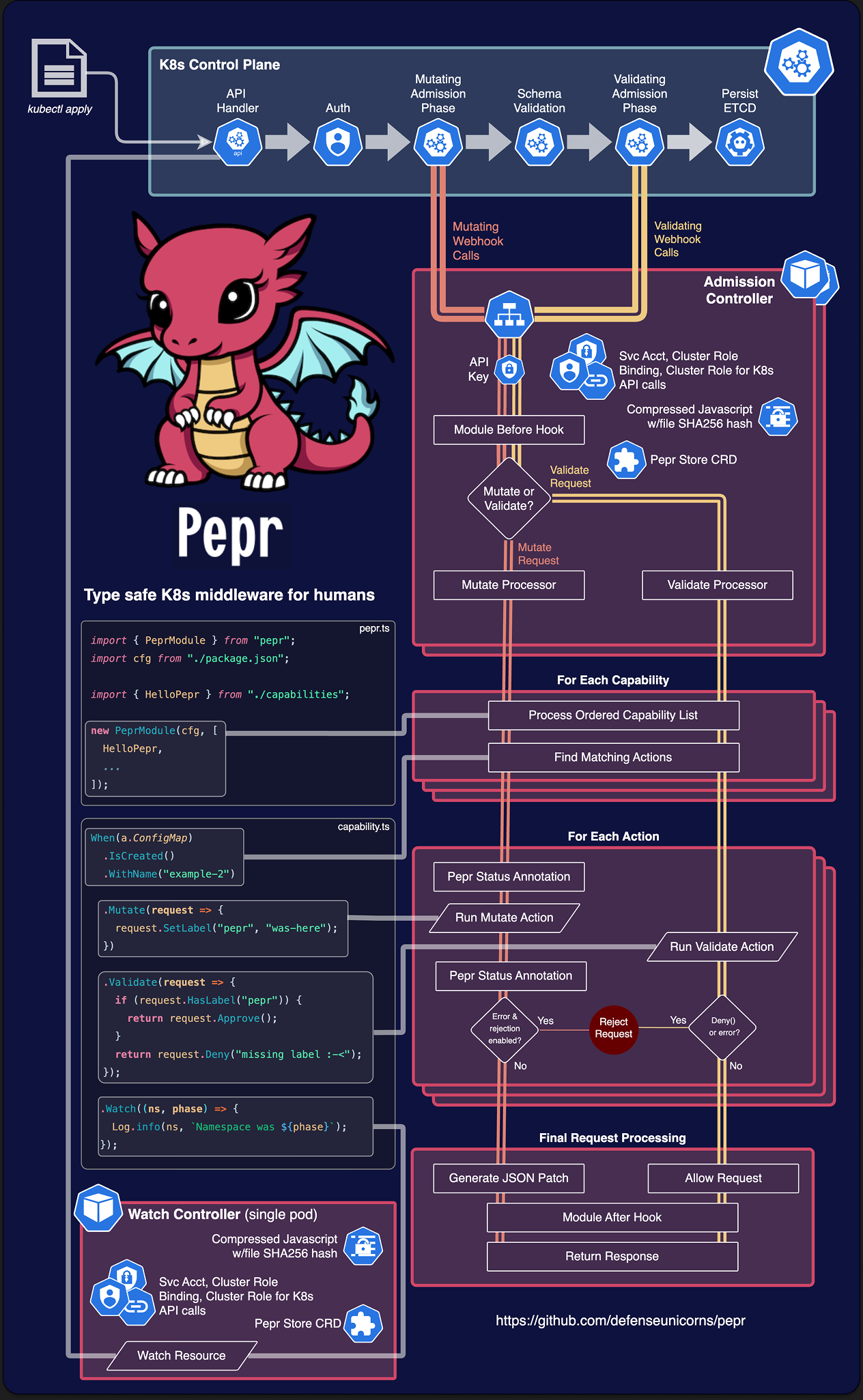

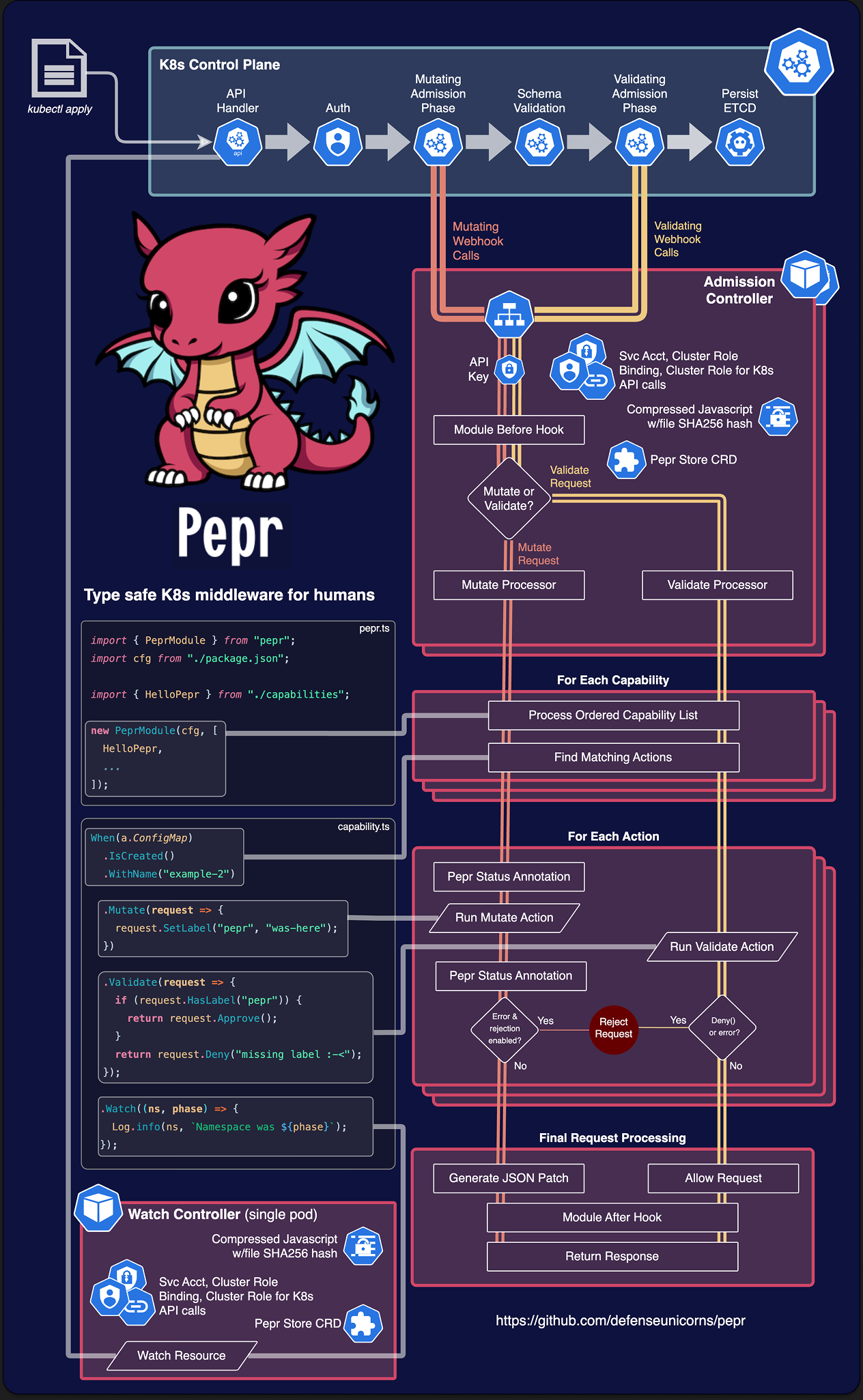

Type safe Kubernetes middleware for humans

Pepr simplifies Kubernetes management by providing an alternative to complex YAML configurations, custom scripts, and ad-hoc solutions.

As a Kubernetes controller, Pepr enables you to define Kubernetes transformations using TypeScript, accessible through straightforward configurations even without extensive development expertise.

Pepr transforms disparate implementation approaches into a cohesive, well-structured, and maintainable system.

With Pepr, you can efficiently convert organizational knowledge into code, improving documentation, testing, validation, and change management for more predictable outcomes.

Features

- Zero-config K8s Mutating and Validating Webhooks plus Controller generation

- Automatic leader-elected K8s resource watching

- Lightweight async key-value store backed by K8s for stateful operations with the Pepr Store

- Human-readable fluent API for generating Pepr Capabilities

- A fluent API for creating/modifying/watching and server-side applying K8s resources via Kubernetes Fluent Client

- Generate new K8s resources based off of cluster resource changes

- Perform other exec/API calls based off of cluster resources changes or any other arbitrary schedule

- Out of the box airgap support with Zarf

- Entire NPM ecosystem available for advanced operations

- Realtime K8s debugging system for testing/reacting to cluster changes

- Controller network isolation and tamper-resistant module execution

- Least-privilege RBAC generation

- AMD64 and ARM64 support

Example Pepr Action

This quick sample shows how to react to a ConfigMap being created or updated in the cluster.

It adds a label and annotation to the ConfigMap and adds some data to the ConfigMap.

It also creates a Validating Webhook to make sure the “pepr” label still exists.

Finally, after the ConfigMap is created, it logs a message to the Pepr controller and creates or updates a separate ConfigMap with the kubernetes-fluent-client using server-side apply.

For more details, see the actions section.

When(a.ConfigMap)
  .IsCreatedOrUpdated()
  .InNamespace("pepr-demo")
  .WithLabel("example", "value")
  // Create a Mutate Action for the ConfigMap
  .Mutate(request => {
    // Add a label and annotation to the ConfigMap
    request.SetLabel("pepr", "was-here").SetAnnotation("pepr.dev", "annotations-work-too");

    // Add some data to the ConfigMap
    request.Raw.data["doug-says"] = "Pepr is awesome!";

    // Log a message to the Pepr controller logs
    Log.info("A ConfigMap was created or updated:");
  })
  // Create a Validate Action for the ConfigMap
  .Validate(request => {
    // Validate the ConfigMap has a specific label
    if (request.HasLabel("pepr")) {
      return request.Approve();
    }

    // Reject the ConfigMap if it doesn't have the label
    return request.Deny("ConfigMap must have the required pepr label");
  })
  // Watch behaves like controller-runtime's Manager.Watch()
  .Watch(async (cm, phase) => {
    Log.info(cm, `ConfigMap was ${phase}.`);

    // Apply a ConfigMap using K8s server-side apply (will create or update)
    await K8s(kind.ConfigMap).Apply({
      metadata: {
        name: "pepr-ssa-demo",
        namespace: "pepr-demo-2",
      },
      data: {
        uid: cm.metadata.uid,
      },
    });
  });

Prerequisites

Quick Start Guide

# Create a new Pepr Module

npx pepr init

# If you already have a K3d cluster you want to use, skip this step

npm run k3d-setup

# Start playing with Pepr now!

# If using Kind, or another local k8s distro instead,

# run `npx pepr dev --host <your_hostname>`

npx pepr dev

kubectl apply -f capabilities/hello-pepr.samples.yaml

[!TIP]

Don’t use an IP address as your --host; it’s not supported. Check your local k8s distro’s documentation for how to reach your localhost, which is where pepr dev is serving the code from.

<video class="td-content" controls src="https://user-images.githubusercontent.com/882485/230895880-c5623077-f811-4870-bb9f-9bb8e5edc118.mp4"></video>

Concepts

Module

A module is the top-level collection of capabilities.

It is a single, complete TypeScript project that includes an entry point to load all the configuration and capabilities, along with their actions.

During the Pepr build process, each module produces a unique Kubernetes MutatingWebhookConfiguration and ValidatingWebhookConfiguration, along with a secret containing the transpiled and compressed TypeScript code.

The webhooks and secret are deployed into the Kubernetes cluster with their own isolated controller.

See Module for more details.

Capability

A capability is a set of related actions that work together to achieve a specific transformation or operation on Kubernetes resources.

Capabilities are user-defined and can include one or more actions.

They are defined within a Pepr module and can be used in both MutatingWebhookConfigurations and ValidatingWebhookConfigurations.

A Capability can have a specific scope, such as mutating or validating, and can be reused in multiple Pepr modules.

See Capabilities for more details.

Action

An action is a discrete set of behaviors defined in a single function that acts on a given Kubernetes GroupVersionKind (GVK) passed in from Kubernetes.

Actions are the atomic operations that are performed on Kubernetes resources by Pepr.

For example, an action could be responsible for adding a specific label to a Kubernetes resource, or for modifying a specific field in a resource’s metadata.

Actions can be grouped together within a Capability to provide a more comprehensive set of operations that can be performed on Kubernetes resources.

There are both Mutate() and Validate() Actions that can be used to modify or validate Kubernetes resources within the admission controller lifecycle.

There are also Watch() and Reconcile() actions that can be used to watch for changes to Kubernetes resources that already exist.

Finally, Finalize() can be used after Watch() or Reconcile() to perform cleanup operations when the resource is deleted.

See actions for more details.

Logical Pepr Flow

TypeScript

TypeScript is a strongly typed, object-oriented programming language built on top of JavaScript.

It provides optional static typing and a rich type system, allowing developers to write more robust code.

TypeScript is transpiled to JavaScript, enabling it to run in any environment that supports JavaScript.

Pepr allows you to use JavaScript or TypeScript to write capabilities, but TypeScript is recommended for its type safety and rich type system.

See the TypeScript docs to learn more.

To join our channel go to Kubernetes Slack and join the #pepr channel.



Community and Support

Introduction

Pepr is a community-driven project. We welcome contributions of all kinds, from bug reports to feature requests to code changes. We also welcome contributions of documentation, tutorials, and examples.

Contributing

You can find all the details on contributing to Pepr at:

Reporting Bugs

Information on reporting bugs can be found at:

Reporting Security Issues

Information on reporting security issues can be found at:


Contributor Guide

Thank you for your interest in contributing to Pepr! We welcome all contributions and are grateful for your help. This guide outlines how to get started with contributing to this project.


Code of Conduct

Please follow our Code of Conduct to maintain a respectful and collaborative environment.

Getting Started

Setup

- Fork the repository.

- Clone your fork locally: git clone https://github.com/your-username/pepr.git

- Install dependencies: npm ci

- Create a new branch for your feature or fix: git checkout -b my-feature-branch

Kubernetes Fluent Client Contributions

Kubernetes Fluent Client is a library used by Pepr that provides a fluent interface for Kubernetes API clients. In Pepr, we use the kubernetes-fluent-client package to interact with Kubernetes resources. Due to the nature of this library, it is important to ensure that any changes made to the Kubernetes Fluent Client are thoroughly tested and validated in Pepr before being merged into the codebase. In particular, we need to ensure that the changes do not break any existing functionality or introduce new bugs, especially in the context of the Pepr Watcher.

If you are making changes to the Kubernetes Fluent Client, please ensure that you run the Soak Test in GitHub Actions to validate your changes.

To run the Soak Test, you can do the following:

- Go to the GitHub Actions tab

- Select the workflow named “Soak Test”

- Click on the “Run workflow” button to get the options to run the workflow

- Select the Kubernetes Fluent Client branch you want to test (KFC dev branch) and click “Run workflow”

Submitting a Pull Request

- Create an Issue: For significant changes, please create an issue first, describing the problem or feature proposal. Trivial fixes do not require an issue.

- Commit Your Changes: Make your changes and commit them. All commits must be signed.

- Run Tests: Ensure that your changes pass all tests by running npm test.

- Push Your Branch: Push your branch to your fork on GitHub.

- Create a Pull Request: Open a pull request against the main branch of the Pepr repository. Please make sure that your PR passes all CI checks.

PR Requirements

- PRs must be against the main branch.

- PRs must pass CI checks.

- All commits must be signed.

- PRs should have a related issue, except for trivial fixes.

We take PR reviews seriously and strive to provide a great contributor experience with timely feedback. To help maintain this, we ask external contributors to limit themselves to no more than two open PRs at a time. Having too many open PRs can slow down the review process and reduce the quality of feedback.

Coding Guidelines

Please follow the coding conventions and style used in the project. Use ESLint and Prettier for linting and formatting:

- Check formatting: npm run format:check

- Fix formatting: npm run format:fix

- If regex is used, provide a link to regex101.com with an explanation of the regex pattern.

- Do not use emoji in logs or comments, as it can be distracting and is not consistent with the project’s style.

Git Hooks

- This project uses husky to manage git hooks for pre-commit and pre-push actions.

- pre-commit will automatically run linters so that you don’t need to remember to run npm run format:* commands.

- pre-push will warn you if you’ve changed lots of lines on a branch and encourage you to optionally present the changes as several smaller PRs to facilitate easier PR reviews.

- The pre-push hook is an opinionated way of working, and is therefore optional.

- You can opt in to using the pre-push hook by setting PEPR_HOOK_OPT_IN=1 as an environment variable.

Running Tests

Run Tests Locally

Test a Local Development Version

- Run npm test and wait for completion.

- Change to the test module directory: cd pepr-test-module

- You can now run any of the npx pepr commands.

Running Development Version Locally

- Run npm run build to build the package.

- To run the modified pepr, you have two options:

  - Use npx ts-node ./src/cli.ts init to run the modified code directly, without installing it locally. You’ll also need to run npx link <your_dev_pepr_location> inside your pepr module to link to the development version of pepr.

  - Install the pre-built package with npm install pepr-0.0.0-development.tgz. You’ll need to re-run the installation after every build, though.

- Run npx pepr dev inside your module’s directory to run the modified version of pepr.

[!TIP]

Make sure to re-run npm run build after you modify any of the pepr source files.

For any questions or concerns, please open an issue on GitHub or contact the maintainers.


Pepr Best Practices


High Availability

Pepr is designed to be deployed in a high availability (HA) configuration. This means that you can run multiple instances of the Pepr Admission Controller in different availability zones or regions to ensure that your Pepr deployment is resilient to failures. By default, the Admission Controller is deployed with two replicas.

For modules that have a failurePolicy of Fail, it is recommended to opt into podAntiAffinity in the helm chart by setting admission.antiAffinity to true in the values.yaml of the helm chart generated after running npx pepr build. This ensures the pods are scheduled on different nodes, providing better fault tolerance for when nodes are unavailable. Modules running on edge devices or single node environments should keep admission.antiAffinity set to false to avoid scheduling issues.

admission:
  antiAffinity: true

Due to the nature of having two different instances of the Admission Controller, it is important to ensure that the modules are idempotent and do not store ephemeral data since there is no guarantee which replica will serve the request. When state must be saved, use the PeprStore.
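Idempotent here means that running the same logic twice leaves the resource exactly as a single run would, so it does not matter which replica handles a request or whether a request is retried. The sketch below illustrates the idea on a plain label map. The `Labels` type and both helper functions are hypothetical names chosen for illustration and are not part of the Pepr API.

```typescript
// Hypothetical label map; stands in for metadata.labels on a resource.
type Labels = Record<string, string>;

// Idempotent: returns a copy of `labels` with `key` set to `value`.
// Feeding the output back in changes nothing.
function ensureLabel(labels: Labels, key: string, value: string): Labels {
  return { ...labels, [key]: value };
}

// Not idempotent: every call appends, so the result depends on how
// many times the function ran.
function appendSuffix(labels: Labels, key: string, suffix: string): Labels {
  return { ...labels, [key]: (labels[key] ?? "") + suffix };
}

const once = ensureLabel({ app: "db" }, "pepr", "true");
const twice = ensureLabel(once, "pepr", "true");

const appendedOnce = appendSuffix({}, "note", "x");
const appendedTwice = appendSuffix(appendedOnce, "note", "x");
```

Here `once` and `twice` hold the same labels, while `appendedOnce` and `appendedTwice` differ. Logic shaped like `ensureLabel` is the kind that is safe to run behind multiple replicas.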

Mutating Webhook Errors

When developing mutating admission policies, it is essential to include a validation step immediately after applying mutations. This ensures that the changes made by the mutating admission policy were applied correctly and do not introduce unintended inconsistencies or invalid configurations into your Kubernetes cluster.

Why Validate After Mutating?

- Detect Misconfigurations Early:

Mutating admission policies modify incoming resource configurations dynamically. Without validation, you risk introducing invalid configurations into your cluster if the mutation logic contains bugs, unintended side effects, or runs too long and causes a Webhook Timeout.

- Maintain Cluster Integrity:

By validating the mutated resource, you ensure it adheres to expected formats, standards, and constraints, maintaining the health and stability of your cluster.

- Catch Logic Errors in Mutations:

A mutation may not always produce the intended output due to edge cases, unexpected inputs, or incorrect assumptions in the mutation logic.

Validation helps catch such issues early and becomes particularly important if your Module is configured to use a Webhook failurePolicy of Ignore. In that case, admission request failures won’t prevent further processing or acceptance of requests whose mutation failed, which could result in undesirable resources getting into your cluster!

- Comply with Kubernetes Best Practices:

Kubernetes resources must meet specific structural and functional requirements. Validating ensures compliance, preventing the risk of deployment failures or runtime errors.

How to implement a Validate-After-Mutate Pattern

- Apply the desired transformations to the resource in the Mutate block.

- Validate the mutated resource in the Validate block to ensure it adheres to the expected structure.

- If the validation fails, reject the resource with a descriptive message explaining the issue.

When(a.Pod)
  .IsCreated()
  .InNamespace("my-app")
  .WithName("database")
  .Mutate(po => po.SetLabel("pepr", "true"))
  .Validate(po => {
    // Approve only if the mutation above actually took effect
    if (po.Raw.metadata?.labels?.["pepr"] === "true") {
      return po.Approve();
    }
    return po.Deny("Needs pepr label set to true");
  });

Core Development

When developing new features in Pepr Core, it is recommended to use npx pepr deploy --image pepr:dev, which will deploy Pepr’s Kubernetes manifests to the cluster with the development image. This will allow you to test your changes without having to build a new image and push it to a registry.

The workflow for developing features in Pepr is:

- Run npm test, which will create a k3d cluster and build a development image called pepr:dev.

- Deploy the development image into the cluster with npx pepr deploy --image pepr:dev

Debugging

Pepr is composed of Modules, Capabilities, and Actions:

- Actions are the blocks of code containing filters, Mutate, Validate, Watch, Reconcile, and OnSchedule.

- Capabilities, such as hello-pepr.ts, group related Actions.

- Modules are the result of npx pepr init. You can have as many Capabilities as you would like in a Module.

Pepr is a webhook-based system, meaning it is event-driven.

When a resource is created, updated, or deleted, Pepr is called to perform the actions you have defined in your Capabilities.

It’s common for multiple webhooks to exist in a cluster, not just Pepr.

When there are multiple webhooks, the order in which they are called is not guaranteed.

The only guarantee is that all of the MutatingWebhooks will be called before all of the ValidatingWebhooks.

After the admission webhooks are called, the Watch and Reconcile are called.

The Reconcile and Watch create a watch on the resources specified in the When block and are watched for changes after admission.

The difference between Reconcile and Watch is that Reconcile processes events in a queue to guarantee that they are handled in order, whereas Watch does not.

Considering that many webhooks may be modifying the same resource, it is best practice to validate the resource after mutations are made to ensure that the resource is in a valid state if it has been changed since the last mutation.

When(a.Pod)
  .IsCreated()
  .InNamespace("my-app")
  .WithName("database")
  .Mutate(pod => {
    pod.SetLabel("pepr", "true");
  })
  // another mutating webhook could remove labels
  .Validate(pod => {
    if (pod.Raw.metadata?.labels?.["pepr"] === "true") {
      return pod.Approve();
    }
    return pod.Deny("Needs pepr label set to true");
  });
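To see why validating after mutating matters when several webhooks touch the same resource, consider the simplified model below. It is a sketch under stated assumptions and does not use the Pepr or Kubernetes APIs: `Resource`, `Mutator`, `Validator`, and `admit` are hypothetical names, and the array order stands in for whichever order the API server happens to call the mutating webhooks in.

```typescript
// Hypothetical, minimal stand-in for a Kubernetes resource.
interface Resource {
  labels: Record<string, string>;
}

type Mutator = (r: Resource) => Resource;
type Validator = (r: Resource) => string | null; // null means approved

// Run every mutator first, then every validator. This mirrors the one
// ordering guarantee described above: mutating webhooks all run before
// validating webhooks.
function admit(resource: Resource, mutators: Mutator[], validators: Validator[]) {
  const mutated = mutators.reduce((current, mutate) => mutate(current), resource);
  const errors = validators
    .map(validate => validate(mutated))
    .filter((message): message is string => message !== null);
  return { allowed: errors.length === 0, errors, resource: mutated };
}

const addPeprLabel: Mutator = r => ({ labels: { ...r.labels, pepr: "true" } });

// Stand-in for some other webhook in the cluster that strips all labels.
const stripLabels: Mutator = () => ({ labels: {} });

const requirePeprLabel: Validator = r =>
  r.labels["pepr"] === "true" ? null : "Needs pepr label set to true";

const kept = admit({ labels: {} }, [addPeprLabel], [requirePeprLabel]);
const stripped = admit({ labels: {} }, [addPeprLabel, stripLabels], [requirePeprLabel]);
```

In this model `kept.allowed` is true, while `stripped.allowed` is false because the second mutator removed the label before validation ran. Without the validator, the stripped resource would have been admitted silently.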

If you think your Webhook is not being called for a given resource, check the *WebhookConfiguration.

Debugging During Module Development

Pepr supports breakpoints in the VSCode editor. To use breakpoints, run npx pepr dev in the root of a Pepr module using a JavaScript Debug Terminal. This command starts the Pepr development server running at localhost:3000 with the *WebhookConfiguration configured to send AdmissionRequest objects to the local address.

This allows you to set breakpoints in Mutate(), Validate(), Reconcile(), Watch() or OnSchedule() and step through module code.

Note that you will need a cluster running:

k3d cluster create pepr-dev --k3s-arg '--debug@server:0' --wait

When(a.Pod)
  .IsCreated()
  .InNamespace("my-app")
  .WithName("database")
  .Mutate(pod => {
    // Set a breakpoint here
    pod.SetLabel("pepr", "true");
  })
  .Validate(pod => {
    // Set a breakpoint here
    if (pod.Raw.metadata?.labels?.["pepr"] !== "true") {
      return pod.Deny("Label 'pepr' must be 'true'");
    }
    return pod.Approve();
  });

Logging

Pepr can deploy two types of controllers: Admission and Watch. The controllers deployed are dictated by the Actions called for by a given set of Capabilities (Pepr only deploys what is necessary). Within those controllers, the default log level is info but that can be changed to debug by setting the LOG_LEVEL environment variable to debug.

To pull logs for all controller pods:

kubectl logs -l app -n pepr-system

Admission Controller

If the focus of the debug is on a Mutate() or Validate(), the relevant logs will be from pods with label pepr.dev/controller: admission.

kubectl logs -l pepr.dev/controller=admission -n pepr-system

More refined admission logs, which can optionally be filtered by the module UUID, can be obtained with npx pepr monitor.

Watch Controller

If the focus of the debug is a Watch(), Reconcile(), or OnSchedule(), look for logs from pods containing label pepr.dev/controller: watcher.

kubectl logs -l pepr.dev/controller=watcher -n pepr-system

Internal Error Occurred

Error from server (InternalError): Internal error occurred: failed calling webhook "<pepr_module>pepr.dev": failed to call webhook: Post ...

When an internal error occurs, check the deployed *WebhookConfiguration resources’ timeout and failurePolicy configurations. If the failurePolicy is set to Fail, and a request cannot be processed within the timeout, that request will be rejected. If the failurePolicy is set to Ignore, given the same timeout conditions, the request will (perhaps surprisingly) be allowed to continue.

If you have a validating webhook, the recommendation is to set the failurePolicy to Fail so that the request is rejected if the webhook fails.

failurePolicy: Fail

matchPolicy: Equivalent

timeoutSeconds: 3
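The interaction between the timeout and the failurePolicy can be summarized in a few lines. The function below is only a model of the behavior described above, not code from Pepr or the Kubernetes API server. `resolveAdmission`, `AdmissionOutcome`, and the parameter names are hypothetical.

```typescript
type FailurePolicy = "Fail" | "Ignore";

interface AdmissionOutcome {
  allowed: boolean;
  reason: string;
}

// Model the outcome of a single webhook call.
// `webhookDecision` is what the webhook would answer if it responded in time.
function resolveAdmission(
  webhookDecision: boolean,
  responseMs: number,
  timeoutSeconds: number,
  failurePolicy: FailurePolicy,
): AdmissionOutcome {
  const timedOut = responseMs > timeoutSeconds * 1000;
  if (!timedOut) {
    return { allowed: webhookDecision, reason: "webhook responded" };
  }
  // The call failed, so the policy (not the webhook) decides the outcome.
  return failurePolicy === "Fail"
    ? { allowed: false, reason: "webhook timed out; failurePolicy Fail rejects" }
    : { allowed: true, reason: "webhook timed out; failurePolicy Ignore allows" };
}

// A webhook that would have denied the request, but took 5s against a 3s timeout.
const ignored = resolveAdmission(false, 5000, 3, "Ignore");
const failed = resolveAdmission(false, 5000, 3, "Fail");
```

Note that `ignored.allowed` is true even though the webhook would have denied the request. That is the surprising case called out above, and the reason Fail is recommended for validating webhooks.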

The failurePolicy and timeouts can be set in the Module’s package.json file, under the pepr configuration key. If changed, the settings will be reflected in the *WebhookConfiguration after the next build:

"pepr": {
  "uuid": "static-test",
  "onError": "ignore",
  "webhookTimeout": 10
}

Read more on customization.

Pepr Store Custom Resource

If you need to read all store keys, or you think the PeprStore is malfunctioning, you can check the PeprStore CR:

kubectl get peprstore -n pepr-system -o yaml

You should run in npx pepr dev mode to debug the issue, see the Debugging During Module Development section for more information.

Deployment

Production environment deployments should be declarative in order to avoid mistakes. The Pepr modules should be generated with npx pepr build and moved into the appropriate location.

Development environment deployments can use npx pepr deploy to deploy Pepr’s Kubernetes manifests into the cluster or npx pepr dev to actively debug the Pepr module with breakpoints in the code editor.

Keep Modules Small

Modules are minified and built JavaScript files that are stored in a Kubernetes Secret in the cluster. The Secret is mounted in the Pepr Pod and is processed by Pepr Core. Due to the nature of the module being packaged in a Secret, it is recommended to keep the modules as small as possible to avoid hitting the 1MB limit of secrets.

Recommendations for keeping modules small are:

- Don’t repeat yourself

- Only import the part of the library modules that you need

It is suggested to lint and format your modules using npx pepr format.

Monitoring

Pepr can monitor Mutations and Validations from the Admission Controller through the npx pepr monitor [module-uuid] command. This command displays a neatly formatted log showing approved and rejected Validations as well as the Mutations. If [module-uuid] is not supplied, it uses all Pepr admission controller logs as the data source. If you are unsure which modules are currently deployed, run npx pepr uuid to display the modules and their descriptions.

✅ MUTATE pepr-demo/pepr-demo (50c5d836-335e-4aa5-8b56-adecb72d4b17)

✅ VALIDATE pepr-demo/example-2 (01c1d044-3a33-4160-beb9-01349e5d7fea)

❌ VALIDATE pepr-demo/example-evil-cm (8ee44ca8-845c-4845-aa05-642a696b51ce)

[ 'No evil CM annotations allowed.' ]

Multiple Modules or Multiple Capabilities

Each module has its own Mutating and Validating webhook configurations, Admission and Watch Controllers, and Stores. This allows each module to be deployed independently of the others. However, creating multiple modules adds overhead on the kube-apiserver and the cluster.

Due to the overhead costs, it is recommended to deploy multiple capabilities that share the same resources (when possible). This will simplify analysis of which capabilities are responsible for changes on resources.

However, there are some cases where multiple modules make sense, for instance when different teams own separate modules, or when one module handles Validations and another handles Mutations. If you have a use case where you need to deploy multiple modules, it is recommended to separate concerns by operating in different namespaces.

OnSchedule

OnSchedule is supported by a PeprStore to safeguard against schedule loss following a pod restart. It is utilized at the top level, distinct from being within a Validate, Mutate, Reconcile or Watch. Recommended intervals are 30 seconds or longer, and jobs are advised to be idempotent, meaning that if the code is applied or executed multiple times, the outcome should be the same as if it had been executed only once. A major use-case for OnSchedule is day 2 operations.

Security

To enhance the security of your Pepr Controller, we recommend following these best practices:

- Regularly update Pepr to the latest stable release.

- Secure Pepr through RBAC using scoped mode taking into account access to the Kubernetes API server needed in the callbacks.

- Practice the principle of least privilege when assigning roles and permissions and avoid giving the service account more permissions than necessary.

- Use NetworkPolicy to restrict traffic from Pepr Controllers to the minimum required.

- Limit calls from Pepr to the Kubernetes API server to the minimum required.

- Set webhook failure policies to Fail to ensure that the request is rejected if the webhook fails. More on this below.

When using Pepr as a Validating Webhook, it is recommended to set the Webhook’s failurePolicy to Fail. This can be done in your Pepr module in the values.yaml file of the helm chart by setting admission.failurePolicy to Fail, or in the package.json under pepr by setting the onError flag to reject and then running npx pepr build again.

By following these best practices, you can help protect your Pepr Controller from potential security threats.

Reconcile

Reconcile fills a similar niche to .Watch() and runs in the Watch Controller, but it employs a Queue to force sequential processing of resource states once they are returned by the Kubernetes API. This allows things like operators to handle bursts of events without overwhelming the system or the Kubernetes API. It provides a mechanism to back off when the system is under heavy load, enhancing overall stability and maintaining the state consistency of Kubernetes resources, as the order of operations can impact the final state of a resource. For example, creating and then deleting a resource should be processed in that exact order to avoid state inconsistencies.

When(WebApp)
  .IsCreatedOrUpdated()
  .Validate(validator)
  .Reconcile(async instance => {
    // Do work here
  });
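The value of queued, sequential processing can be shown with a small stand-alone model. This is a sketch only and is not how Pepr implements its queue. `SerialQueue`, `sleep`, and `demo` are hypothetical names, and the delays simulate a slow handler for a "created" event followed by a fast handler for a "deleted" event.

```typescript
// A tiny FIFO queue: each task starts only after the previous one settles.
class SerialQueue {
  private tail: Promise<void> = Promise.resolve();

  enqueue<T>(task: () => Promise<T>): Promise<T> {
    const run = this.tail.then(task);
    // Keep the chain alive even if a task rejects.
    this.tail = run.then(
      () => undefined,
      () => undefined,
    );
    return run;
  }
}

const sleep = (ms: number) => new Promise<void>(resolve => setTimeout(resolve, ms));

// Handle a slow "created" event and a fast "deleted" event through the queue.
async function demo(): Promise<string[]> {
  const processed: string[] = [];
  const queue = new SerialQueue();

  const created = queue.enqueue(async () => {
    await sleep(20);
    processed.push("created");
  });
  const deleted = queue.enqueue(async () => {
    await sleep(1);
    processed.push("deleted");
  });

  await Promise.all([created, deleted]);
  return processed;
}
```

Because the second task cannot start until the first has settled, `demo()` records "created" before "deleted" even though the delete handler is faster. Running the same two handlers concurrently without a queue would record them in the opposite order.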

Pepr Store

The store is backed by etcd in a PeprStore resource, and updates happen at 5-second intervals when an array of patches is sent to the Kubernetes API Server. The store is intentionally not designed to be transactional; instead, it is built to be eventually consistent, meaning that the last operation within the interval will be persisted, potentially overwriting other operations. Changes to the data are made without a guarantee that they will occur simultaneously, so caution is needed in managing errors and ensuring consistency.
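The "last operation within the interval wins" behavior can be pictured with a simplified in-memory model. This is an illustration under stated assumptions, not the Pepr Store implementation. `BatchedStore` and its methods are hypothetical, `flush()` stands in for the periodic send to the API server, and the choice that reads only see flushed data is a modeling assumption.

```typescript
// Hypothetical in-memory stand-in for a batched, eventually consistent store.
class BatchedStore {
  private persisted = new Map<string, string>();
  // Pending operations keyed by item; null marks a removal.
  private pending = new Map<string, string | null>();

  setItem(key: string, value: string): void {
    this.pending.set(key, value); // a later write replaces an earlier pending one
  }

  removeItem(key: string): void {
    this.pending.set(key, null);
  }

  // In this model, reads only see what has already been flushed.
  getItem(key: string): string | undefined {
    return this.persisted.get(key);
  }

  // Stand-in for the periodic batch update (for example, every 5 seconds).
  flush(): void {
    this.pending.forEach((value, key) => {
      if (value === null) {
        this.persisted.delete(key);
      } else {
        this.persisted.set(key, value);
      }
    });
    this.pending.clear();
  }
}

const store = new BatchedStore();
store.setItem("replicas", "1");
store.setItem("replicas", "3"); // overwrites the pending "1"
const beforeFlush = store.getItem("replicas");
store.flush();
const afterFlush = store.getItem("replicas");
```

Here `beforeFlush` is undefined and `afterFlush` is "3": the intermediate value "1" was never persisted. Code that depends on every intermediate write being observed needs a different mechanism.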

Watch

Pepr streamlines the process of receiving timely change notifications on resources by employing the Watch mechanism. It is advisable to opt for Watch over Mutate or Validate when dealing with more extended operations, as Watch does not face any timeout limitations. Additionally, Watch proves particularly advantageous for monitoring previously existing resources within a cluster. One compelling scenario for leveraging Watch is when there is a need to chain API calls together, allowing Watch operations to be sequentially executed following Mutate and Validate actions.

When(a.Pod)
  .IsCreated()
  .InNamespace("my-app")
  .WithName("database")
  .Mutate(pod => { /* ... */ })
  .Validate(pod => { /* ... */ })
  .Watch(async (pod, phase) => {
    Log.info({ pod }, `Pod was ${phase}.`);
    // do consecutive api calls
  });


User Guide

In this section you can find detailed information about Pepr and how to use it.

Sections

You can find the following information in this section:


Pepr CLI

npx pepr

Type safe K8s middleware for humans

Options:

-V, --version - output the version number

--features <features> - Comma-separated feature flags (feature=value,feature2=value2)

-h, --help - display help for command

Commands:

crd Scaffold and generate Kubernetes CRDs from structured TypeScript definitions

init [options] Initialize a new Pepr Module

build [options] Build a Pepr Module for deployment

deploy [options] Deploy a Pepr Module

dev [options] Setup a local webhook development environment

update [options] Update this Pepr module. Not recommended for prod as it may change files.

format [options] Lint and format this Pepr module

monitor [module-uuid] Monitor a Pepr Module

uuid [uuid] Module UUID(s) currently deployed in the cluster

kfc [options] [args…] Execute Kubernetes Fluent Client commands

npx pepr build

Build a Pepr Module for deployment.

Options:

-M, --rbac-mode <mode> - Override module config and set RBAC mode. (choices: "admin", "scoped")

-I, --registry-info <registry/username> - Provide the image registry and username for building and pushing a custom WASM container. Requires authentication. Conflicts with --custom-image and --registry. Builds and pushes '<registry/username>/custom-pepr-controller:<current-version>'.

-P, --with-pull-secret <name> - Use image pull secret for controller Deployment. (default: "")

-c, --custom-name <name> - Set name for zarf component and service monitors in helm charts.

-e, --entry-point <file> - Specify the entry point file to build with. (default: pepr.ts)

-i, --custom-image <image> - Specify a custom image with version for deployments. Conflicts with --registry-info and --registry. Example: 'docker.io/username/custom-pepr-controller:v1.0.0'

-n, --no-embed - Disable embedding of deployment files into output module. Useful when creating library modules intended solely for reuse/distribution via NPM.

-o, --output <directory> - Set output directory. (default: "dist")

-r, --registry <registry> - Container registry: Choose container registry for deployment manifests. Conflicts with --custom-image and --registry-info. (choices: "GitHub", "Iron Bank")

-t, --timeout <seconds> - How long the API server should wait for a webhook to respond before treating the call as a failure.

-z, --zarf <manifest|chart> - Set Zarf package type (choices: "manifest", "chart", default: "manifest")

-h, --help - display help for command

Create a zarf.yaml and K8s manifest for the current module. This includes everything needed to deploy Pepr and the current module into production environments.

npx pepr crd

Scaffold and generate Kubernetes CRDs from structured TypeScript definitions.

Options:

-h, --help - display help for command

Commands:

create [options] Create a new CRD TypeScript definition

generate [options] Generate CRD manifests from TypeScript definitions stored in ‘api/’ of the current directory.

help [command] display help for command

npx pepr crd create

Create a new CRD TypeScript definition.

Options:

-S, --scope <scope> - Whether the resulting custom resource is cluster- or namespace-scoped (choices: "Namespaced", "Cluster", default: "Namespaced")

-d, --domain <domain> - Optional domain for CRD (e.g. pepr.dev) (default: "pepr.dev")

-g, --group <group> - API group (e.g. cache)

-k, --kind <kind> - Kind name (e.g. memcached)

-p, --plural <plural> - Plural name for CRD (e.g. memcacheds)

-s, --short-name <name> - Short name for CRD (e.g. mc)

-v, --version <version> - API version (e.g. v1alpha1)

-h, --help - display help for command

npx pepr crd generate

Generate CRD manifests from TypeScript definitions stored in ‘api/’ of the current directory.

Options:

-o, --output <directory> - Output directory for generated CRDs (default: "./crds")

-h, --help - display help for command

npx pepr deploy

Deploy the current module into a Kubernetes cluster, useful for CI systems. Not recommended for production use.

Options:

-E, --docker-email <email> - Email for Docker registry.

-P, --docker-password <password> - Password for Docker registry.

-S, --docker-server <server> - Docker server address.

-U, --docker-username <username> - Docker registry username.

-f, --force - Force deploy the module, override manager field.

-i, --image <image> - Override the image tag.

-p, --pull-secret <name> - Deploy imagePullSecret for Controller private registry.

-y, --yes - Skip confirmation prompts.

-h, --help - display help for command

npx pepr dev

Setup a local webhook development environment

Options:

-H, --host <host> - Host to listen on (default: “host.k3d.internal”)

-y, --yes - Skip confirmation prompt

-h, --help - display help for command

Connect a local cluster to a local version of the Pepr Controller to do real-time debugging of your module. Note that npx pepr dev assumes a K3d cluster is running by default. If you are working with Kind or another Docker-based K8s distro, you will need to pass the --host host.docker.internal option to npx pepr dev. If you are working with a remote cluster, you will have to give Pepr a host path to your machine that is reachable from the K8s cluster.

NOTE: This command, by necessity, installs resources into the cluster you run it against. Generally, these resources are removed once the pepr dev session ends but there are two notable exceptions:

- the pepr-system namespace, and

- the PeprStore CRD.

These can’t be auto-removed because they’re global in scope & doing so would risk wrecking any other Pepr deployments that are already running in-cluster. If (for some strange reason) you’re not pepr dev-ing against an ephemeral dev cluster and need to keep the cluster clean, you’ll have to remove these hold-overs yourself (or not)!

npx pepr format

Lint and format this Pepr module.

Options:

-v, --validate-only - Do not modify files, only validate formatting.

-h, --help - display help for command

npx pepr init

Initialize a new Pepr Module.

Options:

-d, --description <string> - Explain the purpose of the new module.

-e, --error-behavior <behavior> - Set an error behavior. (choices: “audit”, “ignore”, “reject”)

-n, --name <string> - Set the name of the new module.

-s, --skip-post-init - Skip npm install, git init, and VSCode launch.

-u, --uuid <string> - Unique identifier for your module with a max length of 36 characters.

-y, --yes - Skip verification prompt when creating a new module.

-h, --help - display help for command

npx pepr kfc

Execute a kubernetes-fluent-client command. This command is a wrapper around kubernetes-fluent-client.

Options:

-y, --yes - Skip confirmation prompt.

-h, --help - display help for command

Usage:

npx pepr kfc [options] [command]

If you are unsure of what commands are available, you can run npx pepr kfc to see the available commands.

For example, to generate usable types from a Kubernetes CRD, you can run npx pepr kfc crd [source] [directory]. This will generate the types for the [source] CRD and output the generated types to the [directory].

You can learn more about the kubernetes-fluent-client here.

npx pepr monitor

Monitor Validations for a given Pepr Module or all Pepr Modules.

Options:

-h, --help - display help for command

Usage:

npx pepr monitor [options] [module-uuid]

npx pepr update

Update the current Pepr Module to the latest SDK version. This command is not recommended for production use. Instead, we recommend Renovate or Dependabot for automated updates.

Options:

-s, --skip-template-update - Do not update template files

-y, --yes - Skip confirmation prompt

-h, --help - display help for command

npx pepr uuid

Module UUID(s) currently deployed in the cluster with their descriptions. [uuid] represents a specific module uuid in the cluster.

Options:

-h, --help - display help for command

4.2 - Pepr SDK

To use, import the sdk from the pepr package:

import { sdk } from "pepr";

containers

Returns a list of all containers in a pod. Accepts the following parameters:

- @param peprValidationRequest The request/pod to get the containers from

- @param containerType The type of container to get

Usage:

Get all containers

const { containers } = sdk;

let result = containers(peprValidationRequest)

Get only the standard containers

const { containers } = sdk;

let result = containers(peprValidationRequest, "containers")

Get only the init containers

const { containers } = sdk;

let result = containers(peprValidationRequest, "initContainers")

Get only the ephemeral containers

const { containers } = sdk;

let result = containers(peprValidationRequest, "ephemeralContainers")
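To make the effect of the containerType parameter concrete, the sketch below mimics the selection logic on a plain pod object. It is not Pepr's implementation: PodLike, ContainerLike, and listContainers are hypothetical names, and the real helper takes a Pepr request rather than a bare pod.

```typescript
// Hypothetical stand-ins for the Kubernetes pod types; not Pepr's own types.
interface ContainerLike {
  name: string;
  image?: string;
}

interface PodLike {
  spec?: {
    containers?: ContainerLike[];
    initContainers?: ContainerLike[];
    ephemeralContainers?: ContainerLike[];
  };
}

type ContainerType = "containers" | "initContainers" | "ephemeralContainers";

// Return one container list when a type is given, otherwise all three combined.
function listContainers(pod: PodLike, containerType?: ContainerType): ContainerLike[] {
  const spec: NonNullable<PodLike["spec"]> = pod.spec ?? {};
  if (containerType) {
    return spec[containerType] ?? [];
  }
  return [
    ...(spec.containers ?? []),
    ...(spec.initContainers ?? []),
    ...(spec.ephemeralContainers ?? []),
  ];
}

const pod: PodLike = {
  spec: {
    containers: [{ name: "app" }],
    initContainers: [{ name: "setup" }],
  },
};

console.log(listContainers(pod).map(c => c.name));
console.log(listContainers(pod, "initContainers").map(c => c.name));
```

Missing lists are treated as empty, so asking for a container type the pod does not define yields an empty array rather than an error.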

getOwnerRefFrom

Returns the owner reference for a Kubernetes resource as an array. Accepts the following parameters:

- @param kubernetesResource: GenericKind The Kubernetes resource to get the owner reference for

- @param blockOwnerDeletion: boolean If true, AND if the owner has the “foregroundDeletion” finalizer, then the owner cannot be deleted from the key-value store until this reference is removed.

- @param controller: boolean If true, this reference points to the managing controller.

Usage:

const { getOwnerRefFrom } = sdk;

const ownerRef = getOwnerRefFrom(kubernetesResource);
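As a rough illustration of the returned value, the sketch below builds a one-element owner reference array from an owning resource. The field names follow the Kubernetes OwnerReference schema; ResourceLike, OwnerRefLike, and ownerRefSketch are hypothetical names used only here, and Pepr's own helper may differ in detail.

```typescript
// Minimal, hypothetical shapes for illustration; Pepr works with GenericKind
// and the Kubernetes OwnerReference type instead.
interface ResourceLike {
  apiVersion: string;
  kind: string;
  metadata: { name: string; uid: string };
}

interface OwnerRefLike {
  apiVersion: string;
  kind: string;
  name: string;
  uid: string;
  blockOwnerDeletion?: boolean;
  controller?: boolean;
}

// Build a one-element owner reference array from the owning resource.
function ownerRefSketch(
  owner: ResourceLike,
  blockOwnerDeletion?: boolean,
  controller?: boolean,
): OwnerRefLike[] {
  const ref: OwnerRefLike = {
    apiVersion: owner.apiVersion,
    kind: owner.kind,
    name: owner.metadata.name,
    uid: owner.metadata.uid,
  };
  // Only include the optional flags when the caller supplied them.
  if (blockOwnerDeletion !== undefined) ref.blockOwnerDeletion = blockOwnerDeletion;
  if (controller !== undefined) ref.controller = controller;
  return [ref];
}

const owner: ResourceLike = {
  apiVersion: "cache.pepr.dev/v1alpha1",
  kind: "Memcache",
  metadata: { name: "example", uid: "1234-abcd" },
};

// The array form can be assigned to metadata.ownerReferences of a child resource.
const child = {
  apiVersion: "v1",
  kind: "ConfigMap",
  metadata: { name: "example-config", ownerReferences: ownerRefSketch(owner, true, true) },
};
console.log(JSON.stringify(child.metadata.ownerReferences));
```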

writeEvent

Write a K8s event for a CRD. Accepts the following parameters:

- @param kubernetesResource: GenericKind The Kubernetes resource to write the event for

- @param event The event to write, should contain a human-readable message for the event

- @param options Configuration options for the event.

- eventType: string – The type of event to write, for example “Warning”

- eventReason: string – The reason for the event, for example “ReconciliationFailed”

- reportingComponent: string – The component that is reporting the event, for example “uds.dev/operator”

- reportingInstance: string – The instance of the component that is reporting the event, for example process.env.HOSTNAME

Usage:

const { writeEvent } = sdk;

const event = { message: "Resource was created." };

writeEvent(kubernetesResource, event, {

eventType: "Info",

eventReason: "ReconciliationSuccess",

reportingComponent: "uds.dev/operator",

reportingInstance: process.env.HOSTNAME,

});

sanitizeResourceName

Returns a sanitized resource name to make the given name a valid Kubernetes resource name. Accepts the following parameter:

- @param resourceName The name of the resource to sanitize

Usage:

const { sanitizeResourceName } = sdk;

const sanitizedResourceName = sanitizeResourceName(resourceName)
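For intuition about what sanitizing involves, here is a minimal sketch that coerces a string towards the Kubernetes DNS subdomain rules (lowercase alphanumerics, "-" or ".", starting and ending with an alphanumeric, at most 253 characters). The function sanitizeNameSketch is hypothetical and is not Pepr's implementation, which may handle edge cases differently.

```typescript
// Hypothetical re-implementation for illustration only.
function sanitizeNameSketch(name: string): string {
  return (
    name
      .toLowerCase()
      // Collapse each run of disallowed characters into a single dash.
      .replace(/[^a-z0-9.-]+/g, "-")
      // DNS subdomain names are limited to 253 characters.
      .slice(0, 253)
      // Names must start and end with an alphanumeric character.
      .replace(/^[^a-z0-9]+|[^a-z0-9]+$/g, "")
  );
}

console.log(sanitizeNameSketch("My_App Name!")); // my-app-name
```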

See Also

Looking for information on the Pepr mutate helpers? See Mutate Helpers for information on those.

4.3 - Pepr Modules

What is a Pepr Module?

A Pepr Module is a collection of capabilities, config and scaffolding in a Pepr Project. To create a module use the npx pepr init command.

4.4 - Pepr Capabilities

A capability is a set of related actions that work together to achieve a specific transformation or operation on Kubernetes resources. Capabilities are user-defined and can include one or more actions. They are defined within a Pepr module and can be used in both MutatingWebhookConfigurations and ValidatingWebhookConfigurations. A Capability can have a specific scope, such as mutating or validating, and can be reused in multiple Pepr modules.

When you npx pepr init, a capabilities directory is created for you. This directory is where you will define your capabilities. You can create as many capabilities as you need, and each capability can contain one or more actions. Pepr also automatically creates a HelloPepr capability with a number of example actions to help you get started.

Creating a Capability

Defining a new capability can be done via a VSCode Snippet generated during npx pepr init.

Create a new file in the capabilities directory with the name of your capability. For example, capabilities/my-capability.ts.

Open the new file in VSCode and type create in the file. A suggestion should prompt you to generate the content from there.

If you prefer not to use VSCode, you can also modify or copy the HelloPepr capability to meet your needs instead.

Reusable Capabilities

Pepr has an NPM org managed by Defense Unicorns, @pepr, where capabilities are published for reuse in other Pepr Modules. You can find a list of published capabilities here.

You also can publish your own Pepr capabilities to NPM and import them. A couple of things you’ll want to be aware of when publishing your own capabilities:

Reusable capability versions should use a format such as 0.x.x or 0.12.x so that compatibility with other reusable capabilities can be determined. Before 1.x.x, we recommend binding to 0.x.x if you can for maximum compatibility.

pepr.ts will still be used for local development, but you’ll also need to publish an index.ts that exports your capabilities. When you build & publish the capability to NPM, you can use npx pepr build --entry-point index.ts to generate the code needed for reuse by other Pepr modules.

4.5 - Custom Resources

Importing Custom Resources

The Kubernetes Fluent Client supports the creation of TypeScript typings directly from Kubernetes Custom Resource Definitions (CRDs). The files it generates can be directly incorporated into Pepr capabilities and provide a way to work with strongly-typed CRDs.

For example (below), Istio CRDs can be imported and used as though they were intrinsic Kubernetes resources.

Generating TypeScript Types from CRDs

Using the kubernetes-fluent-client to produce a new type looks like this:

npx kubernetes-fluent-client crd [source] [directory]

The crd command expects a [source], which can be a URL or local file containing the CustomResourceDefinition(s), and a [directory] where the generated code will live.

The following example creates types for the Istio CRDs:

user@workstation$ npx kubernetes-fluent-client crd https://raw.githubusercontent.com/istio/istio/master/manifests/charts/base/crds/crd-all.gen.yaml crds

Attempting to load https://raw.githubusercontent.com/istio/istio/master/manifests/charts/base/crds/crd-all.gen.yaml as a URL

- Generating extensions.istio.io/v1alpha1 types for WasmPlugin

- Generating networking.istio.io/v1alpha3 types for DestinationRule

- Generating networking.istio.io/v1beta1 types for DestinationRule

- Generating networking.istio.io/v1alpha3 types for EnvoyFilter

- Generating networking.istio.io/v1alpha3 types for Gateway

- Generating networking.istio.io/v1beta1 types for Gateway

- Generating networking.istio.io/v1beta1 types for ProxyConfig

- Generating networking.istio.io/v1alpha3 types for ServiceEntry

- Generating networking.istio.io/v1beta1 types for ServiceEntry

- Generating networking.istio.io/v1alpha3 types for Sidecar

- Generating networking.istio.io/v1beta1 types for Sidecar

- Generating networking.istio.io/v1alpha3 types for VirtualService

- Generating networking.istio.io/v1beta1 types for VirtualService

- Generating networking.istio.io/v1alpha3 types for WorkloadEntry

- Generating networking.istio.io/v1beta1 types for WorkloadEntry

- Generating networking.istio.io/v1alpha3 types for WorkloadGroup

- Generating networking.istio.io/v1beta1 types for WorkloadGroup

- Generating security.istio.io/v1 types for AuthorizationPolicy

- Generating security.istio.io/v1beta1 types for AuthorizationPolicy

- Generating security.istio.io/v1beta1 types for PeerAuthentication

- Generating security.istio.io/v1 types for RequestAuthentication

- Generating security.istio.io/v1beta1 types for RequestAuthentication

- Generating telemetry.istio.io/v1alpha1 types for Telemetry

✅ Generated 23 files in the istio directory

Observe that the kubernetes-fluent-client has produced the TypeScript types within the crds directory. These types can now be utilized in the Pepr module.

user@workstation$ cat crds/proxyconfig-v1beta1.ts

// This file is auto-generated by kubernetes-fluent-client, do not edit manually

import { GenericKind, RegisterKind } from "kubernetes-fluent-client";

export class ProxyConfig extends GenericKind {

/**

* Provides configuration for individual workloads. See more details at:

* https://istio.io/docs/reference/config/networking/proxy-config.html

*/

spec?: Spec;

status?: { [key: string]: any };

}

/**

* Provides configuration for individual workloads. See more details at:

* https://istio.io/docs/reference/config/networking/proxy-config.html

*/

export interface Spec {

/**

* The number of worker threads to run.

*/

concurrency?: number;

/**

* Additional environment variables for the proxy.

*/

environmentVariables?: { [key: string]: string };

/**

* Specifies the details of the proxy image.

*/

image?: Image;

/**

* Optional.

*/

selector?: Selector;

}

/**

* Specifies the details of the proxy image.

*/

export interface Image {

/**

* The image type of the image.

*/

imageType?: string;

}

/**

* Optional.

*/

export interface Selector {

/**

* One or more labels that indicate a specific set of pods/VMs on which a policy should be

* applied.

*/

matchLabels?: { [key: string]: string };

}

RegisterKind(ProxyConfig, {

group: "networking.istio.io",

version: "v1beta1",

kind: "ProxyConfig",

});

Using new types

The generated types can be imported into Pepr directly. No additional logic is needed to make them work.

import { Capability, K8s, Log, a, kind } from "pepr";

import { Gateway } from "../crds/gateway-v1beta1";

import {

PurpleDestination,

VirtualService,

} from "../crds/virtualservice-v1beta1";

export const IstioVirtualService = new Capability({

name: "istio-virtual-service",

description: "Generate Istio VirtualService resources",

});

// Use the 'When' function to create a new action

const { When, Store } = IstioVirtualService;

// Define the configuration keys

enum config {

Gateway = "uds/istio-gateway",

Host = "uds/istio-host",

Port = "uds/istio-port",

Domain = "uds/istio-domain",

}

// Define the valid gateway names

const validGateway = ["admin", "tenant", "passthrough"];

// Watch Gateways to get the HTTPS domain for each gateway

When(Gateway)

.IsCreatedOrUpdated()

.WithLabel(config.Domain)

.Watch(vs => {

// Store the domain for the gateway

Store.setItem(vs.metadata.name, vs.metadata.labels[config.Domain]);

});

4.6 - Customization

This document outlines how to customize the build output through Helm overrides and package.json configurations.

Redact Store Values from Logs

By default, store values are displayed in logs. To redact them, set the PEPR_STORE_REDACT_VALUES environment variable to true in the package.json file or directly on the Watcher or Admission Deployment. The default value is undefined.

{

"env": {

"PEPR_STORE_REDACT_VALUES": "true"

}

}

Display Node Warnings

You can display warnings in the logs by setting the PEPR_NODE_WARNINGS environment variable to true in the package.json file or directly on the Watcher or Admission Deployment. The default value is undefined.

{

"env": {

"PEPR_NODE_WARNINGS": "true"

}

}

Customizing Log Format

The log format can be customized by setting the PINO_TIME_STAMP environment variable in the package.json file or directly on the Watcher or Admission Deployment. The default value is a partial JSON timestamp string representation of the time. If set to iso, the timestamp is displayed in an ISO format.

Caution: attempting to format time in-process will significantly impact logging performance.

{

"env": {

"PINO_TIME_STAMP": "iso"

}

}

With ISO:

{"level":30,"time":"2024-05-14T14:26:03.788Z","pid":16,"hostname":"pepr-static-test-7f4d54b6cc-9lxm6","method":"GET","url":"/healthz","status":200,"duration":"1 ms"}

Default (without):

{"level":30,"time":"1715696764106","pid":16,"hostname":"pepr-static-test-watcher-559d94447f-xkq2h","method":"GET","url":"/healthz","status":200,"duration":"1 ms"}
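If you keep the default format for performance reasons, the epoch-millisecond time field can still be converted when reading logs. The sketch below is one way to do that outside the cluster; logTimeToIso is a hypothetical helper, not part of Pepr.

```typescript
// Convert the default epoch-millisecond "time" field of a log line to ISO 8601.
function logTimeToIso(line: string): string {
  const entry = JSON.parse(line) as { time: string | number };
  const millis = Number(entry.time);
  if (Number.isNaN(millis)) {
    // Not numeric, for example already an ISO timestamp; return it unchanged.
    return String(entry.time);
  }
  return new Date(millis).toISOString();
}

const defaultLine =
  '{"level":30,"time":"1715696764106","pid":16,"method":"GET","url":"/healthz","status":200}';
console.log(logTimeToIso(defaultLine)); // 2024-05-14T14:26:04.106Z
```

Doing the conversion at read time keeps the formatting cost out of the logging path, which is the concern raised in the caution above.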

Customizing Watch Configuration

The Watch configuration is a part of the Pepr module that allows you to watch for specific resources in the Kubernetes cluster. The Watch configuration can be customized through environment variables on the Watcher Deployment, which can be set in the package.json or in the Helm values.yaml file.

| Field | Description | Example Values |
|---|---|---|
| PEPR_RESYNC_FAILURE_MAX | The maximum number of times to fail on a resync interval before re-establishing the watch URL and doing a relist. | default: "5" |
| PEPR_RETRY_DELAY_SECONDS | The delay between retries in seconds. | default: "10" |
| PEPR_LAST_SEEN_LIMIT_SECONDS | Max seconds to go without receiving a watch event before re-establishing the watch | default: "300" (5 mins) |
| PEPR_RELIST_INTERVAL_SECONDS | Number of seconds to wait before a relist of the watched resources | default: "600" (10 mins) |
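The following sketch shows how these variables and their documented defaults could be read into a typed object. It only illustrates the fallback behaviour; WatchSettings, intFromEnv, and readWatchSettings are hypothetical names and do not reflect how Pepr parses its environment internally.

```typescript
// Defaults come from the watch configuration fields listed above;
// the WatchSettings shape itself is hypothetical.
interface WatchSettings {
  resyncFailureMax: number;
  retryDelaySeconds: number;
  lastSeenLimitSeconds: number;
  relistIntervalSeconds: number;
}

// Parse a positive integer from an env value, falling back to the default.
function intFromEnv(value: string | undefined, fallback: number): number {
  const parsed = Number.parseInt(value ?? "", 10);
  return Number.isFinite(parsed) && parsed > 0 ? parsed : fallback;
}

function readWatchSettings(env: Record<string, string | undefined>): WatchSettings {
  return {
    resyncFailureMax: intFromEnv(env.PEPR_RESYNC_FAILURE_MAX, 5),
    retryDelaySeconds: intFromEnv(env.PEPR_RETRY_DELAY_SECONDS, 10),
    lastSeenLimitSeconds: intFromEnv(env.PEPR_LAST_SEEN_LIMIT_SECONDS, 300),
    relistIntervalSeconds: intFromEnv(env.PEPR_RELIST_INTERVAL_SECONDS, 600),
  };
}

// Only the retry delay is overridden; everything else keeps its default.
console.log(readWatchSettings({ PEPR_RETRY_DELAY_SECONDS: "3" }));
```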

Configuring Reconcile

The Reconcile Action allows you to maintain ordering of resource updates processed by a Pepr controller. The Reconcile configuration can be customized via environment variable on the Watcher Deployment, which can be set in the package.json or in the helm values.yaml file.

| Field | Description | Example Values |
|---|---|---|
| PEPR_RECONCILE_STRATEGY | How Pepr should order resource updates being Reconcile()’d. | default: "kind" |

| Available Options | |
|---|---|
| kind | separate queues of events for Reconcile()’d resources of a kind |
| kindNs | separate queues of events for Reconcile()’d resources of a kind, within a namespace |
| kindNsName | separate queues of events for Reconcile()’d resources of a kind, within a namespace, per name |
| global | a single queue of events for all Reconcile()’d resources |
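One way to picture the four strategies is as different ways of choosing which queue an event lands in: events that share a queue are processed in order, while events in different queues are independent. The sketch below derives such a queue key. The key format, ResourceRef, and queueKey are hypothetical and are not taken from Pepr's source.

```typescript
type ReconcileStrategy = "kind" | "kindNs" | "kindNsName" | "global";

interface ResourceRef {
  kind: string;
  namespace?: string;
  name: string;
}

// Derive the queue a resource's events would share under each strategy.
// The key format is an assumption made for this illustration.
function queueKey(strategy: ReconcileStrategy, resource: ResourceRef): string {
  const ns = resource.namespace ?? "cluster-scoped";
  switch (strategy) {
    case "kind":
      return resource.kind;
    case "kindNs":
      return `${resource.kind}/${ns}`;
    case "kindNsName":
      return `${resource.kind}/${ns}/${resource.name}`;
    default:
      // "global": every resource shares one queue.
      return "global";
  }
}

const a: ResourceRef = { kind: "Pod", namespace: "team-a", name: "web" };
const b: ResourceRef = { kind: "Pod", namespace: "team-b", name: "web" };

console.log(queueKey("kind", a) === queueKey("kind", b)); // true: one queue per kind
console.log(queueKey("kindNs", a) === queueKey("kindNs", b)); // false: one queue per namespace
```

Finer-grained strategies allow more work to proceed independently, at the cost of ordering guarantees across resources that no longer share a queue.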

Customizing with Helm

Below are the available Helm override configurations after you have built your Pepr module that you can put in the values.yaml.

Helm Overrides Table

| Parameter | Description | Example Values |
|---|---|---|
| additionalIgnoredNamespaces | Namespaces to ignore in addition to alwaysIgnore.namespaces from Pepr config in package.json. | - pepr-playground |
| secrets.apiToken | Kube API-Server Token. | Buffer.from(apiToken).toString("base64") |
| hash | Unique hash for deployment. Do not change. | <your_hash> |
| namespace.annotations | Namespace annotations | {} |
| namespace.labels | Namespace labels | {"pepr.dev": ""} |
| uuid | Unique identifier for the module | hub-operator |
| admission.* | Admission controller configurations | Various, see subparameters below |
| watcher.* | Watcher configurations | Various, see subparameters below |

Admission and Watcher Subparameters

| Subparameter | Description |
|---|---|
| failurePolicy | Webhook failure policy [Ignore, Fail] |
| webhookTimeout | Timeout seconds for webhooks [1 - 30] |
| env | Container environment variables |
| image | Container image |
| annotations | Deployment annotations |
| labels | Deployment labels |
| securityContext | Pod security context |
| readinessProbe | Pod readiness probe definition |
| livenessProbe | Pod liveness probe definition |
| resources | Resource limits |
| containerSecurityContext | Container’s security context |
| nodeSelector | Node selection constraints |
| tolerations | Tolerations to taints |
| affinity | Node scheduling options |
| terminationGracePeriodSeconds | Optional duration in seconds the pod needs to terminate gracefully |

Note: Replace * within admission.* or watcher.* to apply settings specific to the desired subparameter (e.g. admission.failurePolicy).

Customizing with package.json

Below are the available configurations through package.json.

package.json Configurations Table

| Field | Description | Example Values |
|---|---|---|
| uuid | Unique identifier for the module | hub-operator |
| onError | Behavior of the webhook failure policy | audit, ignore, reject |
| webhookTimeout | Webhook timeout in seconds | 1 - 30 |
| customLabels | Custom labels for namespaces | {namespace: {}} |
| alwaysIgnore | Conditions to always ignore | {namespaces: []} |
| admission | admission namespaces to always ignore | {alwaysIgnore: {namespaces: []}} |
| watch | watcher namespaces to always ignore | {alwaysIgnore: {namespaces: []}} |
| includedFiles | For working with WebAssembly | ["main.wasm", "wasm_exec.js"] |
| env | Environment variables for the container | {LOG_LEVEL: "warn"} |
| rbac | Custom RBAC rules (requires building with rbacMode: scoped) | [{"apiGroups": ["<apiGroups>"], "resources": ["<resources>"], "verbs": ["<verbs>"]}] |
| rbacMode | Configures module to build binding RBAC with principal of least privilege | scoped, admin |
| additionalWebhooks | Additional webhooks configuration | [{"failurePolicy": "Fail", "namespace": "example-namespace"}] |

admission.alwaysIgnore && watcher.alwaysIgnore: These configurations cannot be used with the global alwaysIgnore field. They are used to specify namespaces that should always be ignored by the admission controller or watcher, respectively.

uuid: An identifier for the module in the pepr-system namespace. If not provided, a UUID will be generated. It can be any Kubernetes-acceptable name that is under 36 characters.

These tables provide a comprehensive overview of the fields available for customization within the Helm overrides and the package.json file. Modify these according to your deployment requirements.

Example Custom RBAC Rules

The following example demonstrates how to add custom RBAC rules to the Pepr module.

{

"pepr": {

"rbac": [

{

"apiGroups": ["pepr.dev"],

"resources": ["customresources"],

"verbs": ["get", "list"]

},

{

"apiGroups": ["apps"],

"resources": ["deployments"],

"verbs": ["create", "delete"]

}

]

}

}

4.7 - Feature Flags

Feature flags allow you to enable, disable, or configure features in Pepr.

They provide a way to manage feature rollouts, toggle experimental features, and maintain configuration across different environments.

Feature flags work across both CLI and in-cluster environments, using the same underlying implementation.

Pepr’s feature flag system supports:

- Boolean flags

- String values

- Numeric values

- Default values for flags

- Type safety through TypeScript

- Configuration via environment variables

- Configuration via initialization strings

- Consistent behavior in both CLI and in-cluster environments

Best Practices

- Temporary Usage: Use feature flags for temporary changes, gradual rollouts, or experimental features. Do NOT use feature flags for permanent configuration.

- Descriptive Names: Choose descriptive flag names and provide detailed descriptions in the metadata.

- Default Values: Always provide sensible default values for feature flags.

- Type Safety: Leverage TypeScript’s type system when accessing feature flags.

- Cleanup: Remove feature flags that are no longer needed to maintain a clean codebase and stay within the feature flag limit.

Getting Started

Using Feature Flags

Enable a CLI feature flag via environment variable:

PEPR_FEATURE_REFERENCE_FLAG=true npx pepr@latest

Enable a CLI feature flag via argument:

npx pepr@latest --features="reference_flag=true"

Configure feature flags for a Pepr module:

Add to your package.json:

"pepr": {

"env": {

"PEPR_FEATURE_REFERENCE_FLAG": true

}

}

Defining Feature Flags

Feature flags in Pepr are defined in the FeatureFlags object.

The FeatureFlags object is the source of truth for all available feature flags.

Important: To reduce complexity, Pepr enforces a limit of 4 active feature flags.

Exceeding this limit results in an error (e.g., Error: Too many feature flags active: 5 (maximum: 4).)

Feature Flags have:

- A unique key used for programmatic access

- Metadata containing name, description, and default value

Feature flag values are converted to appropriate types:

- "true" and "false" strings are converted to boolean values

- Numeric strings are converted to numbers

- All other values remain as strings
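The conversion rules above can be sketched as a small function. This is an illustration only: convertFlagValue is a hypothetical name, and details such as case sensitivity or whitespace handling are assumptions rather than Pepr's documented behaviour.

```typescript
type FlagValue = boolean | number | string;

// Apply the three documented rules in order: booleans, numbers, then strings.
function convertFlagValue(raw: string): FlagValue {
  if (raw === "true") return true;
  if (raw === "false") return false;
  // Treat non-empty strings that parse cleanly as numbers (an assumption).
  if (raw.trim() !== "" && !Number.isNaN(Number(raw))) return Number(raw);
  return raw;
}

console.log(typeof convertFlagValue("true")); // boolean
console.log(typeof convertFlagValue("42")); // number
console.log(typeof convertFlagValue("beta")); // string
```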

Example definition:

export const FeatureFlags: Record<string, FeatureInfo> = {

REFERENCE_FLAG: {

key: "reference_flag",

metadata: {

name: "Reference Flag",

description: "A feature flag to show intended usage.",

defaultValue: false,

},

},

};

Configuration and Workflows

Feature flags are configured in two ways for either CLI or Module use.

Only feature flags defined in the FeatureFlags object can be configured.

Attempting to use an undefined flag will result in an error.

Pepr CLI Workflow

Using environment variables with the format PEPR_FEATURE_<FLAG_NAME>:

# Run Pepr with debug logging to verify feature flags

PEPR_FEATURE_REFERENCE_FLAG=true LOG_LEVEL=debug npx pepr@latest

Using the --features CLI argument:

LOG_LEVEL=debug npx pepr@latest --features="reference_flag=true"
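Both inputs end up naming the same flag key. The sketch below shows one plausible mapping from PEPR_FEATURE_<FLAG_NAME> variables and from a single key=value argument to flag keys, plus a check against the documented limit of 4. All function names are hypothetical, only a single key=value pair is handled, and what Pepr counts as an "active" flag is an assumption here.

```typescript
const MAX_ACTIVE_FLAGS = 4; // limit stated in this document
const ENV_PREFIX = "PEPR_FEATURE_";

// Map PEPR_FEATURE_<FLAG_NAME> variables to lower-case flag keys.
function flagsFromEnv(env: Record<string, string | undefined>): Record<string, string> {
  const flags: Record<string, string> = {};
  for (const [name, value] of Object.entries(env)) {
    if (name.startsWith(ENV_PREFIX) && value !== undefined) {
      flags[name.slice(ENV_PREFIX.length).toLowerCase()] = value;
    }
  }
  return flags;
}

// Parse a single "key=value" pair as passed to --features.
function flagFromArgument(argument: string): [string, string] {
  const separator = argument.indexOf("=");
  if (separator <= 0) {
    throw new Error(`Expected key=value, got "${argument}"`);
  }
  return [argument.slice(0, separator), argument.slice(separator + 1)];
}

// Mirror the documented error message when too many flags are set.
function assertWithinLimit(flags: Record<string, string>): void {
  const active = Object.keys(flags).length;
  if (active > MAX_ACTIVE_FLAGS) {
    throw new Error(`Too many feature flags active: ${active} (maximum: ${MAX_ACTIVE_FLAGS})`);
  }
}

const fromEnv = flagsFromEnv({ PEPR_FEATURE_REFERENCE_FLAG: "true", LOG_LEVEL: "debug" });
const [key, value] = flagFromArgument("reference_flag=true");
assertWithinLimit(fromEnv);
console.log(fromEnv, key, value);
```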

Pepr Module Deployment Workflow

For deployed modules, configure feature flags in the module’s package.json:

"pepr": {

"env": {

"PEPR_FEATURE_REFERENCE_FLAG": true

}

},

Build and deploy the module once environment variables are set with a known $APP_NAME:

# Edit your package.json to include feature flags

jq '.pepr.env.PEPR_FEATURE_REFERENCE_FLAG = true' package.json > package.json.tmp && mv package.json.tmp package.json

npx pepr@latest build

npx pepr@latest deploy -i pepr:dev

APP_NAME=pepr-module-name

kubectl logs -n pepr-system --selector app=$APP_NAME | grep "Feature flags store initialized"

Troubleshooting

Feature Flag Not Recognized

If your feature flag isn’t recognized:

- Verify the flag is defined in the FeatureFlags object

- Check the case of the environment variable (PEPR_FEATURE_REFERENCE_FLAG vs pepr_feature_reference_flag)

- Ensure correct format for CLI flags (reference_flag=true not REFERENCE_FLAG=true)

Too Many Feature Flags

If you receive the error: Error: Too many feature flags active: 5 (maximum: 4)

- Review active feature flags using featureFlagStore.getAll()

- Remove or consolidate unnecessary feature flags

- Consider if some flags could be regular configuration instead

Flag Value Incorrect Type

If your feature flag has an unexpected type:

- Remember that strings "true" and "false" are automatically converted to booleans

- Numeric strings are automatically converted to numbers

- Use explicit type checking in your code: typeof featureFlagStore.get(...)

For Maintainers

Development Guidelines

Accessing and Implementing Feature Flags

To add a new feature flag, update the FeatureFlags object in the source code.

Any feature flag not defined in this object cannot be used in the application.

Access feature flags the same way in both CLI and in-cluster environments using the featureFlagStore.

This snippet shows how to execute different code paths based upon a feature flag value.

To execute within the Pepr project, save the snippet to file.ts and run npx tsx file.ts.

import { featureFlagStore } from "./lib/features/store";

import { FeatureFlags } from "./lib/features/FeatureFlags";

// Get a boolean flag

const isReferenceEnabled = featureFlagStore.get(FeatureFlags.REFERENCE_FLAG.key);

const allFlags = featureFlagStore.getAll();

// Use feature flags for conditional logic

if (featureFlagStore.get(FeatureFlags.REFERENCE_FLAG.key)) {

Log.info(`Flag value: ${isReferenceEnabled} of type ${typeof featureFlagStore.get(FeatureFlags.REFERENCE_FLAG.key)}`)

} else {

Log.info(`All flags: ${JSON.stringify(allFlags)}`)

}

4.8 - Pepr Filters

Filters are functions that take an AdmissionReview or Watch event and return a boolean. They are used to filter out resources that do not meet certain criteria, so that only resources relevant to the user-defined admission or watch process are acted on.

When(a.ConfigMap)

// This limits the action to only act on new resources.

.IsCreated()

// Namespace filter

.InNamespace("webapp")

// Name filter

.WithName("example-1")

// Label filter

.WithLabel("app", "webapp")

.WithLabel("env", "prod")

.Mutate(request => {

request

.SetLabel("pepr", "was-here")

.SetAnnotation("pepr.dev", "annotations-work-too");

});

Filters

- .WithName("name"): Filters resources by name.

- .WithNameRegex(/^pepr/): Filters resources by name using a regex.

- .InNamespace("namespace"): Filters resources by namespace.

- .InNamespaceRegex(/(.*)-system/): Filters resources by namespace using a regex.

- .WithLabel("key", "value"): Filters resources by label. (Can be multiple)

- .WithDeletionTimestamp(): Filters resources that have a deletion timestamp.

Notes:

- WithDeletionTimestamp() does not work on Delete through the Mutate or Validate methods because the Kubernetes Admission Process does not fire the DELETE event with a deletion timestamp on the resource.

- WithDeletionTimestamp() will match on an Update event during Admission (Mutate or Validate) when pending-deletion permitted changes (like removing a finalizer) occur.
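Conceptually, each filter is a predicate over a resource, and a chain of filters matches only when every predicate returns true. The sketch below models that idea with plain functions. It is not how Pepr implements its fluent API; ResourceLike, Filter, and the helper names are hypothetical.

```typescript
// Hypothetical minimal resource shape for illustration.
interface ResourceLike {
  metadata: {
    name: string;
    namespace?: string;
    labels?: Record<string, string>;
    deletionTimestamp?: string;
  };
}

type Filter = (resource: ResourceLike) => boolean;

const withName = (name: string): Filter => r => r.metadata.name === name;
const withNameRegex = (pattern: RegExp): Filter => r => pattern.test(r.metadata.name);
const inNamespace = (namespace: string): Filter => r => r.metadata.namespace === namespace;
// With only a key, the label must exist; with a value, it must also match.
const withLabel = (key: string, value?: string): Filter => r => {
  const labels = r.metadata.labels ?? {};
  return key in labels && (value === undefined || labels[key] === value);
};
const withDeletionTimestamp = (): Filter => r => r.metadata.deletionTimestamp !== undefined;

// A resource passes only if every filter in the chain accepts it.
const matchesAll = (filters: Filter[]): Filter => r => filters.every(f => f(r));

const filter = matchesAll([
  inNamespace("webapp"),
  withName("example-1"),
  withLabel("app", "webapp"),
  withLabel("env", "prod"),
]);

const configMap: ResourceLike = {
  metadata: { name: "example-1", namespace: "webapp", labels: { app: "webapp", env: "prod" } },
};
console.log(filter(configMap)); // true
```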

4.9 - Generating Custom Metrics

Pepr provides a metricsCollector utility that lets you define custom metrics such as counters and gauges for your module.

For example, to track how often a specific event occurs, you can create a custom counter and gauge like this:

import { Capability, a, metricsCollector } from "pepr";

export const HelloPepr = new Capability({

name: "hello-pepr",

description: "An example using the metric collector.",

});

// Register a metric collector to count the number of times the hello-pepr label has been applied

metricsCollector.addCounter(

"label_counter",

"example counter for counting number of times hello-pepr label has been applied",

);

// Register a gauge to count how many times a given label has been applied

metricsCollector.addGauge(

"label_guage",

"example gauge for counting how times times a given label has been applied",

["label"],

);

const { When } = HelloPepr;

When(a.Pod)

.IsCreatedOrUpdated()

.Mutate(po => {

po.SetLabel("hello-pepr", "true");

po.SetLabel("blue", "true");

po.SetLabel("green", "true");

metricsCollector.incCounter("label_counter");

metricsCollector.incGauge("label_guage", { label: "blue" }, 1);

metricsCollector.incGauge("label_guage", { label: "green" }, 1);

metricsCollector.incGauge("label_guage", { label: "hello-pepr" }, 1);

});

You can access these metrics through the /metrics endpoint of your module. For example, if you are running npx pepr dev --yes, you can create some pods and then query the metrics like this:

terminal_a > npx pepr dev --yes

terminal_b > curl -k http://localhost:3000/metrics

...

# HELP pepr_label_counter example counter for counting number of times hello-pepr label has been applied

# TYPE pepr_label_counter counter

pepr_label_counter 0

# HELP pepr_label_guage example gauge for counting how times times a given label has been applied

# TYPE pepr_label_guage gauge

terminal_b > kubectl run a --image=nginx

terminal_b > kubectl run b --image=nginx

terminal_b > kubectl run c --image=nginx

terminal_b > curl -k http://localhost:3000/metrics

...

# TYPE pepr_label_counter counter

pepr_label_counter 3

# HELP pepr_label_guage example gauge for counting how times times a given label has been applied

# TYPE pepr_label_guage gauge

pepr_label_guage{label="blue"} 3

pepr_label_guage{label="green"} 3

pepr_label_guage{label="hello-pepr"} 3
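The output above shows two things worth noting: metric names gain a pepr_ prefix, and each distinct label value becomes its own gauge series. The sketch below reproduces that shape with a tiny in-memory stand-in. TinyCollector is hypothetical and is neither Pepr's metricsCollector nor prom-client.

```typescript
// A tiny in-memory stand-in used only to illustrate prefixing and label series.
class TinyCollector {
  private readonly prefix: string;
  private readonly counters = new Map<string, number>();
  private readonly gauges = new Map<string, number>();

  constructor(prefix: string) {
    this.prefix = prefix;
  }

  incCounter(name: string): void {
    const key = `${this.prefix}_${name}`;
    this.counters.set(key, (this.counters.get(key) ?? 0) + 1);
  }

  // Each distinct label set becomes its own series, keyed by its rendered form.
  incGauge(name: string, labels: Record<string, string>, amount = 1): void {
    const rendered = Object.entries(labels)
      .map(([k, v]) => `${k}="${v}"`)
      .join(",");
    const key = `${this.prefix}_${name}{${rendered}}`;
    this.gauges.set(key, (this.gauges.get(key) ?? 0) + amount);
  }

  // Render counters first, then gauges, one "name value" pair per line.
  render(): string {
    return [...this.counters, ...this.gauges]
      .map(([key, value]) => `${key} ${value}`)
      .join("\n");
  }
}

const collector = new TinyCollector("pepr");
// Simulate three pods passing through the Mutate action.
for (let podIndex = 0; podIndex < 3; podIndex++) {
  collector.incCounter("label_counter");
  collector.incGauge("label_guage", { label: "blue" });
}
console.log(collector.render());
```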

4.10 - Generating CRDs

Pepr comes with the ability to generate Kubernetes Custom Resource Definitions (CRDs) from TypeScript types. This feature is particularly useful for operator developers who are creating Operators that utilize CRDs.

To generate TypeScript types for a CRD, you can use the pepr crd create command. This command allows you to specify the group, version, kind, short name, plural name, and scope of the CRD.

npx pepr crd create \

--group cache \

--version v1alpha1 \

--kind Memcache \

--short-name mc \

--plural memcaches \

--scope Namespaced

This command will create a TypeScript file in the crds directory with the generated types for the specified CRD. The generated file will include the necessary type definitions for the CRD.

Note: The comments in the interface spec and condition type become descriptions for the CRD properties, so you can add comments to your properties to provide additional context or documentation for the generated types.

After adding the required types for your CRD, you can then generate the CRD YAML file using the pepr crd generate command. This command will create a YAML file that defines the CRD in Kubernetes format.

You can control the output directory for the generated CRD YAML file by using the --output option. If the option is omitted, the file is generated in the crds directory by default.

At this point, you can apply the generated CRD to your Kubernetes cluster using kubectl apply -f crds/your-crd-file.yaml.

> kubectl apply -f crds

customresourcedefinition.apiextensions.k8s.io/memcaches.cache.pepr.dev created

4.11 -

Metrics Endpoints

The /metrics endpoint provides metrics for the application that are collected via the MetricsCollector class. It uses the prom-client library and performance hooks from Node.js to gather and expose the metrics data in a format that can be scraped by Prometheus.

Metrics Exposed

The MetricsCollector exposes the following metrics:

- pepr_errors: A counter that increments when an error event occurs in the application.

- pepr_alerts: A counter that increments when an alert event is triggered in the application.

- pepr_mutate: A summary that provides the observed durations of mutation events in the application.

- pepr_mutate_timeouts: A counter that increments when a webhook timeout occurs during mutation.

- pepr_validate: A summary that provides the observed durations of validation events in the application.

- pepr_validate_timeouts: A counter that increments when a webhook timeout occurs during validation.

- pepr_cache_miss: A gauge that provides the number of cache misses per window.

- pepr_resync_failure_count: A gauge that provides the number of unsuccessful attempts at receiving an event within the last seen event limit before re-establishing a new connection.

Environment Variables

| PEPR_MAX_CACHE_MISS_WINDOWS | Maximum number of windows to emit pepr_cache_miss metrics for | default: Undefined |

API Details

Method: GET

URL: /metrics

Response Type: text/plain

Status Codes:

- 200 OK: On success, returns the current metrics from the application.

Response Body:

The response body is a plain text representation of the metrics data, according to the Prometheus exposition formats. It includes the metrics mentioned above.

Examples

Request

GET /metrics

Response

# HELP pepr_errors Mutation/Validate errors encountered

# TYPE pepr_errors counter

pepr_errors 5

# HELP pepr_alerts Mutation/Validate bad api token received

# TYPE pepr_alerts counter

pepr_alerts 10

# HELP pepr_mutate Mutation operation summary

# TYPE pepr_mutate summary

pepr_mutate{quantile="0.01"} 100.60707900021225

pepr_mutate{quantile="0.05"} 100.60707900021225

pepr_mutate{quantile="0.5"} 100.60707900021225

pepr_mutate{quantile="0.9"} 100.60707900021225

pepr_mutate{quantile="0.95"} 100.60707900021225

pepr_mutate{quantile="0.99"} 100.60707900021225

pepr_mutate{quantile="0.999"} 100.60707900021225

pepr_mutate_sum 100.60707900021225

pepr_mutate_count 1

# HELP pepr_validate Validation operation summary

# TYPE pepr_validate summary

pepr_validate{quantile="0.01"} 201.19413900002837

pepr_validate{quantile="0.05"} 201.19413900002837

pepr_validate{quantile="0.5"} 201.2137690000236

pepr_validate{quantile="0.9"} 201.23339900001884

pepr_validate{quantile="0.95"} 201.23339900001884

pepr_validate{quantile="0.99"} 201.23339900001884

pepr_validate{quantile="0.999"} 201.23339900001884

pepr_validate_sum 402.4275380000472

pepr_validate_count 2

# HELP pepr_cache_miss Number of cache misses per window

# TYPE pepr_cache_miss gauge

pepr_cache_miss{window="2024-07-25T11:54:33.897Z"} 18

pepr_cache_miss{window="2024-07-25T12:24:34.592Z"} 0

pepr_cache_miss{window="2024-07-25T13:14:33.450Z"} 22

pepr_cache_miss{window="2024-07-25T13:44:34.234Z"} 19

pepr_cache_miss{window="2024-07-25T14:14:34.961Z"} 0

# HELP pepr_resync_failure_count Number of retries per count

# TYPE pepr_resync_failure_count gauge

pepr_resync_failure_count{count="0"} 5

pepr_resync_failure_count{count="1"} 4
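When checking a response like the one above by hand or in a test, it can help to turn the text into structured samples. The following is a minimal sketch, not part of Pepr or prom-client: `Sample` and `parseMetrics` are hypothetical names, and the parser assumes label values contain no commas, braces, or escaped quotes, and ignores optional timestamps.

```typescript
// One parsed sample line, e.g. pepr_mutate{quantile="0.5"} 100.6
interface Sample {
  name: string;
  labels: Record<string, string>;
  value: number;
}

// Parse Prometheus text exposition output into samples.
// Simplified: see the assumptions listed in the paragraph above.
function parseMetrics(text: string): Sample[] {
  const samples: Sample[] = [];
  for (const raw of text.split("\n")) {
    const line = raw.trim();
    // Skip blank lines and "# HELP" / "# TYPE" comment lines.
    if (line === "" || line.charAt(0) === "#") continue;
    const match = /^([A-Za-z_:][A-Za-z0-9_:]*)(?:\{(.*)\})?\s+(\S+)/.exec(line);
    if (!match) continue;
    const labels: Record<string, string> = {};
    if (match[2]) {
      for (const pair of match[2].split(",")) {
        const eq = pair.indexOf("=");
        if (eq < 0) continue;
        const key = pair.slice(0, eq).trim();
        // Strip the surrounding double quotes from the label value.
        labels[key] = pair.slice(eq + 1).trim().replace(/^"|"$/g, "");
      }
    }
    samples.push({ name: match[1], labels, value: Number(match[3]) });
  }
  return samples;
}
```

Feeding it the response body returned by /metrics yields one entry per sample line, so a check such as "is pepr_errors still 0" becomes a simple lookup by name.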

Prometheus Operator

If using the Prometheus Operator, the following ServiceMonitor example manifests can be used to scrape the /metrics endpoint for the admission and watcher controllers.

apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: admission
spec:
  selector:
    matchLabels:
      pepr.dev/controller: admission
  namespaceSelector:
    matchNames:
      - pepr-system
  endpoints:
    - targetPort: 3000
      scheme: https
      tlsConfig:
        insecureSkipVerify: true
---
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: watcher
spec:
  selector:
    matchLabels:
      pepr.dev/controller: watcher
  namespaceSelector:
    matchNames:
      - pepr-system
  endpoints:
    - targetPort: 3000
      scheme: https
      tlsConfig:
        insecureSkipVerify: true

4.12 -

OnSchedule

The OnSchedule feature lets you run specific code automatically at predefined intervals. It is designed to simplify recurring tasks and can serve as an alternative to traditional CronJobs. Call OnSchedule at the top level of a Capability, not inside a function such as When.

Best Practices

OnSchedule is designed for targeting intervals equal to or larger than 30 seconds due to the storage mechanism used to archive schedule info.

Usage

Create a recurring task execution by calling the OnSchedule function with the following parameters:

name - The unique name of the schedule.

every - An integer that represents the frequency of the schedule in number of units.

unit - A string specifying the time unit for the schedule (e.g., seconds, minute, minutes, hour, hours).

startTime - (Optional) A timestamp indicating when the schedule should start. All date-times must be provided in UTC (GMT). If not specified, the schedule starts when the schedule store reports ready.

run - A function that contains the code you want to execute on the defined schedule.

completions - (Optional) An integer indicating the maximum number of times the schedule should run to completion. If not specified the schedule will run indefinitely.
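The parameters above can be modeled and sanity-checked before a schedule is registered. The sketch below is illustrative only: `ScheduleParams`, `Unit`, `intervalSeconds`, and `checkSchedule` are hypothetical local names rather than Pepr's own types, and the `Unit` union covers only the units named in the text.

```typescript
// Hypothetical local model of the parameters described above; not Pepr's own type.
type Unit = "seconds" | "minute" | "minutes" | "hour" | "hours";

interface ScheduleParams {
  name: string;
  every: number;
  unit: Unit;
  startTime?: Date;
  run: () => void | Promise<void>;
  completions?: number;
}

// Convert `every` + `unit` into a number of seconds.
function intervalSeconds(every: number, unit: Unit): number {
  const perUnit: Record<Unit, number> = {
    seconds: 1,
    minute: 60,
    minutes: 60,
    hour: 3600,
    hours: 3600,
  };
  return every * perUnit[unit];
}

// Return a list of problems; an empty list means the parameters look reasonable.
function checkSchedule(p: ScheduleParams): string[] {
  const problems: string[] = [];
  if (p.name.trim() === "") problems.push("name must not be empty");
  if (Math.floor(p.every) !== p.every || p.every <= 0) {
    problems.push("every must be a positive integer");
  } else if (intervalSeconds(p.every, p.unit) < 30) {
    // Mirrors the 30-second guidance under Best Practices.
    problems.push("interval is shorter than the recommended 30 seconds");
  }
  if (p.completions !== undefined && (Math.floor(p.completions) !== p.completions || p.completions <= 0)) {
    problems.push("completions must be a positive integer when set");
  }
  return problems;
}
```

A check like this is a convenience for module authors; it does not replace whatever validation OnSchedule itself performs.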

Examples

Update a ConfigMap every 30 seconds:

OnSchedule({

name: "hello-interval",

every: 30,

unit: "seconds",

run: async () => {

Log.info("Wait 30 seconds and create/update a ConfigMap");

try {

await K8s(kind.ConfigMap).Apply({

metadata: {

name: "last-updated",

namespace: "default",

},

data: {

count: `${new Date()}`,

},

});

} catch (error) {

Log.error(error, "Failed to apply ConfigMap using server-side apply.");

}

},

});

Refresh an AWSToken every 24 hours, with a delayed start of 30 seconds, running a total of 3 times:

OnSchedule({

name: "refresh-aws-token",

every: 24,

unit: "hours",

startTime: new Date(new Date().getTime() + 1000 * 30),

run: async () => {

await RefreshAWSToken();

},

completions: 3,

});

Advantages

- Simplifies scheduling recurring tasks without the need for complex CronJob configurations.

- Provides flexibility to define schedules in a human-readable format.

- Allows you to execute code with precision at specified intervals.

- Supports limiting the number of schedule completions for finite tasks.

4.13 -

RBAC Modes

During the build phase of Pepr (npx pepr build --rbac-mode [admin|scoped]), you have the option to specify the desired RBAC mode through specific flags. This allows fine-tuning the level of access granted based on requirements and preferences.

Modes

admin

npx pepr build --rbac-mode admin

Description: The service account is given cluster-admin permissions, granting it full, unrestricted access across the entire cluster. This can be useful for administrative tasks where broad permissions are necessary. However, use this mode with caution, as it can pose security risks if misused. This is the default mode.

scoped

npx pepr build --rbac-mode scoped

Description: The service account is given only the permissions it needs to perform its tasks. This mode is recommended for most use cases because it limits potential attack vectors and aligns with security best practices. The admission controller’s primary mutating or validating action doesn’t require a ClusterRole, because the request is not persisted or executed while passing through admission control. However, if the admission controller’s logic reads other Kubernetes resources or takes additional actions beyond validating, mutating, or watching the incoming request, the ClusterRole must reflect the appropriate RBAC settings. See how in Updating the ClusterRole.
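To reason about whether a scoped ClusterRole covers what a module does, it can help to model the rules as data. The sketch below is a simplified illustration, not how Kubernetes or Pepr evaluates RBAC: it uses the `apiGroups`, `resources`, and `verbs` fields of a Kubernetes PolicyRule, ignores resourceNames, subresources, and non-resource URLs, and the `matches`, `allows`, and `rules` names are hypothetical.

```typescript
// Subset of the Kubernetes RBAC PolicyRule fields used by this sketch.
interface PolicyRule {
  apiGroups: string[];
  resources: string[];
  verbs: string[];
}

// True when a list contains the wanted value or the "*" wildcard.
function matches(list: string[], wanted: string): boolean {
  return list.indexOf("*") !== -1 || list.indexOf(wanted) !== -1;
}

// Simplified check: is the verb allowed on the resource by any rule?
function allows(rules: PolicyRule[], apiGroup: string, resource: string, verb: string): boolean {
  return rules.some(
    r => matches(r.apiGroups, apiGroup) && matches(r.resources, resource) && matches(r.verbs, verb)
  );
}

// Hypothetical rules for a module that only reads ConfigMaps (core API group is "").
const rules: PolicyRule[] = [
  { apiGroups: [""], resources: ["configmaps"], verbs: ["get", "list", "watch"] },
];
```

With these rules, `allows(rules, "", "configmaps", "get")` is true while `allows(rules, "", "configmaps", "patch")` is false, which is the kind of gap that surfaces as a Forbidden error when a module tries to apply a ConfigMap.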

Debugging RBAC Issues

If encountering unexpected behaviors in Pepr while running in scoped mode, check to see if they are related to RBAC.

- Check Deployment logs for RBAC errors:

kubectl logs -n pepr-system -l app | jq

# example output

{

"level": 50,

"time": 1697983053758,

"pid": 16,

"hostname": "pepr-static-test-watcher-745d65857d-pndg7",

"data": {

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "configmaps \"pepr-ssa-demo\" is forbidden: User \"system:serviceaccount:pepr-system:pepr-static-test\" cannot patch resource \"configmaps\" in API group \"\" in the namespace \"pepr-demo-2\"",

"reason": "Forbidden",

"details": {

"name": "pepr-ssa-demo",

"kind": "configmaps"

},

"code": 403

},

"ok": false,

"status": 403,

"statusText": "Forbidden",

"msg": "Does the ServiceAccount have permissions to CREATE and PATCH this ConfigMap?"

}

- Verify ServiceAccount permissions with kubectl auth can-i:

SA=$(kubectl get deploy -n pepr-system -o=jsonpath='{range .items[0]}{.spec.template.spec.serviceAccountName}{"\n"}{end}')

# Can I create ConfigMaps as the service account in pepr-demo-2?

kubectl auth can-i create cm --as=system:serviceaccount:pepr-system:$SA -n pepr-demo-2

# example output: no

- Describe the ClusterRole

SA=$(kubectl get deploy -n pepr-system -o=jsonpath='{range .items[0]}{.spec.template.spec.serviceAccountName}{"\n"}{end}')

kubectl describe clusterrole $SA

# example output:

Name: pepr-static-test

Labels: <none>

Annotations: <none>

PolicyRule:

Resources Non-Resource URLs Resource Names Verbs

--------- ----------------- -------------- -----